Access rules API now returns attribute mappings

The Access Rules V2 API now includes a mappings field in every access rule response. This field lists the Rosetta Stone attributes that are mapped to columns selected by the rule, along with the specific attribute properties exposed. Mappings are filtered by the caller’s attribute viewing permissions, so each organization sees only the attributes it has access to. Read more →

Run data plane health checks from the dashboard

You can now trigger a data plane health check directly from the Data Planes list page and the Jobs page. On the Data Planes list, each row’s action menu includes a new Run health check option. The Jobs page header adds a dedicated action button that enqueues a health check against the currently selected data plane. Both entry points provide toast feedback with the enqueued job ID so you can track progress.

Exchange token endpoint for switching company scope

Added a new POST /authentication/exchange-token endpoint that lets an authenticated user exchange their current access token for a new one scoped to a different company they have access to. The request accepts a companyId and returns a fresh login response (access token, legacy token, and session cookies) for the target company, making it easier to build multi-company workflows without re-authenticating.

The /whoami response now also includes an accessibleCompanies field listing every company the caller can switch into, so applications can discover valid targets for exchange-token without a separate lookup.

Granular DML row statistics

DML job results now include a detailed breakdown of row-level changes. In addition to the existing affected_rows count, the Jobs API response now returns inserted_rows, updated_rows, and deleted_rows for each completed DML operation. Materialized view refreshes also report these granular row statistics when available, giving you clearer visibility into exactly what changed during each data operation.

The Jobs API response also includes a new operator_type field that indicates the underlying execution type used by the data plane, decoupled from the user-facing job type.

NQL ARRAY_POSITION function

NQL now supports the ARRAY_POSITION function, which returns the zero-based index of the first occurrence of an element in an array. If the element is not found, or if the search value is NULL, the function returns NULL.

Tab overflow menu and company switch cleanup

The tab bar now includes an overflow menu that appears after your open tabs, giving you quick access to Close all tabs and Close other tabs actions. The menu is hidden when only the Home tab is open, keeping the interface clean.

Additionally, switching between companies now automatically clears your open tabs and navigates you to the home page. Previously, switching companies would reload the current URL in the new company context, which could display tabs referencing records from the previous company.

View datasets

You can now create view datasets by passing create_as_view: true in your NQL CREATE MATERIALIZED VIEW requests. A view dataset acts as a logical layer over your existing data: instead of materializing and storing a full copy, it defines a reusable query that references source datasets directly.

View datasets are ideal for creating curated, read-only perspectives on your data without duplicating storage. When a view dataset is referenced in a query, its underlying NQL definition is automatically expanded and resolved inline.

Notable behavior for view datasets:

- No connections — view datasets cannot have connectors attached, since they don’t store data independently

- No access rules — access rules cannot be created on top of view datasets

- No billing — queries that create view datasets are not billed, as no data is physically materialized

- No forecasting — row-count forecasts are skipped for view dataset queries

- Compatible NQL subset — view datasets do not support MERGE, DELTA, PARTITION BY, or chunking strategies
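A minimal sketch, assuming a JSON request body: it sets create_as_view alongside a CREATE MATERIALIZED VIEW statement and runs a rough client-side pre-check for the keyword-detectable clauses listed above. Only create_as_view comes from this note; the nql key, the build_view_request helper, and the placeholder query are hypothetical, and a plain keyword scan can misfire on identifiers that happen to be named merge or delta.

```python
import re

# Clauses this release note lists as unsupported for view datasets.
# Chunking strategies are not keyword-detectable, so they are not checked here.
_UNSUPPORTED = {
    "MERGE": re.compile(r"\bMERGE\b", re.IGNORECASE),
    "DELTA": re.compile(r"\bDELTA\b", re.IGNORECASE),
    "PARTITION BY": re.compile(r"\bPARTITION\s+BY\b", re.IGNORECASE),
}


def build_view_request(nql: str) -> dict:
    """Return a hypothetical request body for creating a view dataset.

    `create_as_view` is taken from the release note; the `nql` key is an
    assumption about the request shape.
    """
    found = [name for name, pattern in _UNSUPPORTED.items() if pattern.search(nql)]
    if found:
        raise ValueError("view datasets do not support: " + ", ".join(found))
    return {"nql": nql, "create_as_view": True}


if __name__ == "__main__":
    # Placeholder query; real NQL dataset references may look different.
    print(build_view_request(
        "CREATE MATERIALIZED VIEW recent_signups AS SELECT user_id FROM signups"
    ))
```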

App loading screen

The platform now displays a branded loading screen while your workspace initializes. Instead of seeing a blank page or partially loaded interface, you get a smooth visual transition with an animated progress indicator as data planes, installed apps, and configuration load in the background. The loading screen also reappears naturally when switching between companies, providing a consistent experience during context changes.

Classifier Studio

You can now build and train classifiers directly from the platform with the new Classifier Studio, available under My Models alongside LLM Studio and Prompt Studio. A guided builder walks you from dataset selection to a running training job without leaving the UI:

- Dataset & label selection — pick a dataset in your current data plane and choose the label column from its primitive-typed columns (string, boolean, double, long, timestamptz).

- Feature configuration — select dataset columns as features and assign a feature type (text, categorical, numeric, count vectorizer, or embedding); the builder suggests types based on column schema and exposes per-type parameters with sensible defaults.

- Algorithm configuration — choose between Logistic Regression and Random Forest, tune algorithm-specific hyperparameters, and configure your test/train split (test size, random state, stratification).

- Finalize — name and version your model, add tags, confirm the data plane, and review the full configuration summary before kicking off training.
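The options above can be captured as a configuration object and checked before a training job is submitted. This is a sketch only: the ClassifierConfig fields, snake_case identifiers such as random_forest, and the placeholder defaults are assumptions, not Classifier Studio's actual schema or defaults.

```python
from dataclasses import dataclass, field

# Allowed values mirror the options this release note lists for Classifier Studio.
LABEL_TYPES = {"string", "boolean", "double", "long", "timestamptz"}
FEATURE_TYPES = {"text", "categorical", "numeric", "count_vectorizer", "embedding"}
ALGORITHMS = {"logistic_regression", "random_forest"}


@dataclass
class ClassifierConfig:
    """Hypothetical shape of a Classifier Studio configuration."""
    label_column: str
    label_type: str
    algorithm: str
    features: dict = field(default_factory=dict)  # column name -> feature type
    test_size: float = 0.2  # placeholder default, not the product's value
    random_state: int = 0   # placeholder default
    stratify: bool = True


def validate(cfg: ClassifierConfig) -> list:
    """Return human-readable problems; an empty list means the config looks valid."""
    problems = []
    if cfg.label_type not in LABEL_TYPES:
        problems.append(f"label type {cfg.label_type!r} is not a supported primitive type")
    if cfg.algorithm not in ALGORITHMS:
        problems.append(f"unknown algorithm {cfg.algorithm!r}")
    if not 0.0 < cfg.test_size < 1.0:
        problems.append("test_size must be strictly between 0 and 1")
    if not cfg.features:
        problems.append("select at least one feature column")
    for column, ftype in cfg.features.items():
        if ftype not in FEATURE_TYPES:
            problems.append(f"feature {column!r} has unknown type {ftype!r}")
    return problems
```

For example, a boolean churn label with a categorical and a numeric feature under random_forest passes with no problems reported.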

Dataset connector compatibility check

You can now check whether a dataset’s schema is compatible with installed connector interfaces using the new dataset compatibility API. The endpoint validates your dataset schema against all connector interfaces available through your installed apps, returning a list of compatible and incompatible interfaces with detailed validation errors.

Filter results by interface tags to quickly find connectors relevant to your use case. This helps you understand which connectors can work with your data before setting up a delivery, reducing trial and error when configuring data pipelines.

Dataset statistics now include Rosetta Stone attributes

Dataset statistics now include Rosetta Stone normalized attributes alongside native columns: cardinality, distributions, completeness, and approximate distinct counts on normalized values are visible in the same statistics view. Available on Snowflake data planes today, with AWS support coming soon. Read more →

Histograms are now off by default for new datasets, with a limit of 16 histograms per dataset. New Snowflake datasets automatically receive a default statistics configuration, and historical statistics are now stored for trend analysis. These changes apply to Snowflake only; AWS support is in development.

Edge Builder improvements

The Edge Builder now supports using individual leaf properties from object-mapped attributes as target IDs: select specific properties like value, full_name, or city from complex attributes such as hashed identifiers or addresses.

A new edit mode lets you modify existing graph edge mappings without starting over: update source IDs, add or remove target ID groups, and review a summary of pending changes before applying.

Data plane health checks

A new POST /data-planes/{id}/health-check endpoint validates that your data plane infrastructure is correctly configured. For AWS data planes, this includes verifying cross-account trust relationships and IAM permissions. When a health check detects that a compute pool’s backing resource no longer exists, the system automatically archives the pool and elects a new default pool.

Jobs API idempotency

Terminal state transitions in the Jobs API are now idempotent: completing, failing, or cancelling a job that is already in a terminal state returns a successful response instead of an error.

Bug fixes

- Fixed Rosetta AI chat and chat message views not scrolling when conversations exceeded the visible area

- The dataset statistics refresh button now updates record count and size alongside column statistics

- The statistics API no longer fails when a Rosetta Stone attribute’s type references another attribute by ID

- value is no longer treated as a reserved word in NQL, fixing mapping expressions like id.value

Week of March 30, 2026

Audience Studio, Breaking Change, Bug Fix, Composable Identity, Dashboard, Data Studio, Improvement, Integrations, NQL, New Feature, Workflow DSL, Workflows

Graph Studio

Graph Studio is now available in the platform UI, bringing identity graph building to all users. Build identity graphs by selecting source datasets, configuring algorithm parameters like max component size and degree thresholds, and materializing unified edge datasets and connected-components graph tables, all from a guided, step-by-step builder. Graph Studio supports Snowflake data planes today, with AWS support coming soon. Read more →

PubMatic connector

You can now deliver audiences to PubMatic directly from the platform. Create delivery profiles with your data provider ID, then connect datasets to push audience segments. Supported identifiers include MAID, Android Advertising ID, Apple IDFA, SHA256 hashed email, and hashed email. Read more →

Facebook Connector improvements

You can now create Facebook Connector profiles using a personal user access token in addition to system user tokens. A profile type selector lets you choose the right method for your setup, and existing user access token profiles can be re-authenticated in place. Profiles with expired or invalid tokens are also now visually flagged with a red “Invalid Token” badge in the profile list, making it easy to identify and fix connectivity issues before they affect delivery.

Workflows management pages

You can now view and manage workflows directly in the Dashboard under My Data Planes. The new list page shows all workflows with status filters, archive and trigger actions, and the associated data plane. Selecting a workflow opens a detail page with the full YAML definition and run history. API access tokens can also now be scoped to views and workflows resources. Read more →

CreateMaterializedViewIfNotExists now uses full NQL syntax

The CreateMaterializedViewIfNotExists workflow task no longer requires a separate datasetName parameter. Instead, you now provide the complete CREATE MATERIALIZED VIEW statement directly in the nql field. This change simplifies the task interface and gives you full control over the materialized view definition in a single NQL expression.

If you have existing workflows that use CreateMaterializedViewIfNotExists, update them to move the dataset name into the nql field using the CREATE MATERIALIZED VIEW <name> AS ... syntax. The datasetName parameter is no longer accepted.

Additionally, archived datasets are now correctly excluded when checking whether a materialized view already exists, so re-creating a view after archiving the original dataset works as expected.

Audience Studio improvements

Audience Studio received several updates this week. You can now clone an existing audience by selecting Open in Audience Studio from any audience’s action menu, hydrating the builder with the original source, filters, connectors, and metadata. A new maximum audience size setting in the finalize step caps audiences using deterministic sampling, and the cap persists when cloning. The filter attribute dropdown now groups options into Rosetta Stone attributes and Dataset Columns for clarity, and suggested filter percentages now reflect overlap with your forecasted audience rather than static dataset-level statistics.

Improved keyboard navigation for help pages

Help page accordions now scroll smoothly into view when expanded, and code block action buttons are accessible via keyboard focus, not just mouse hover.

NQL ARRAY_SORT function

NQL now supports the ARRAY_SORT function for sorting array elements in ascending order. You can use it to sort arrays of strings, numbers, or other comparable types directly within your queries.

NQL lambda functions now support struct field access

Fixed an issue where TRANSFORM and FILTER lambda functions would fail on arrays of structs. Queries like TRANSFORM(items, x -> x."name") now work correctly. Read more →

NQL array field access permission fix

Fixed an issue where accessing properties within array elements (e.g., ids[1].idValue) could incorrectly evaluate column-level access permissions.

Null value handling in filters

Fixed “NullValue” appearing as a selectable literal in filter dropdowns. Audience Studio now provides proper Is null / Is not null options that generate correct NQL predicates.

Data Studio tab fixes

Fixed incorrect tab titles after navigation. Re-opening a closed Data Studio tab now properly creates a new session.

Yahoo DSP connector fix

Fixed Yahoo DSP audience connections failing due to a missing payload type in the connector’s quick settings.

Audience sources filtered by data plane

Fixed the audience source picker showing datasets from all data planes instead of only the currently selected one.

Week of March 23, 2026

API, Audience Studio, Breaking Change, Bug Fix, Dashboard, Data Studio, Improvement, Integrations, NQL, New Feature, Platform, Rosetta Stone, Workflows

Pinterest Connector

The Pinterest Connector is now fully featured with support for app invites and profile reconnection. You can create, view, and delete invite links directly from the new App Invites tab; each invite generates a unique, shareable URL for partner onboarding. If a profile’s OAuth token expires, you can now refresh it in place from the profile details page without recreating the profile, and generate reconnect invites to let partners re-authorize on their own. Read more →

Rosetta Stone mappings are now global by default

All new Rosetta Stone mappings created via the API are now global immediately upon creation. Previously, user-created mappings were private and required a separate promotion step. Existing private mappings remain unchanged. If you use the TypeScript SDK’s createPrivateMapping method, be aware that it now creates global mappings despite its name; a future SDK release will rename this method for clarity. Read more →

S3 Connector delivers commit files on completion

The S3 Connector now supports writing a _NIO_COMMIT file upon successful data delivery. Enable the new write_narrative_commit_file QuickSettings flag on any S3 connection to have the connector automatically write a commit marker after all data files land. This enables fully automated downstream ingestion pipelines, including Snowflake Secure Share ingestion to AWS data planes, without manual intervention.

Audience Builder supports The Trade Desk

The Audience Builder now supports inline configuration when sending audiences to The Trade Desk: set the audience name, select advertisers, and control historical data delivery directly from the builder without navigating to a separate configuration page. Read more →

Dataset retention policies configuration UI

You can now configure data retention policies directly from the dataset detail page. The new Retention tab lets you create, edit, enable, and delete TTL-based policies, including Row TTL (Snowflake), Snapshot TTL (AWS), and Table TTL (cross-platform). You can also use Ask Rosetta to describe a policy in plain language and have it configured automatically. Read more →

Dataset statistics configuration UI

You can now configure dataset statistics directly from the Dashboard. The new Statistics Configuration option in the dataset actions menu lets you set default stat types, add per-namespace field overrides, tune histogram options, and schedule automatic refreshes, all without writing API calls. Read more →

Compute pool improvements

The compute pools API now exposes compute pool IDs on jobs and datasets, giving you better visibility into which resources ran each workload. The Snowflake Warehouse provider also supports an optional warehouse_name field for direct control over which warehouse handles your workloads. Read more →

App Invites installation filtering

The App Invites API now supports an optional installation_id query parameter for filtering invites to a specific installation. Archived invites are also now automatically excluded from list results.

NQL FILTER and TRANSFORM functions

NQL now supports FILTER and TRANSFORM lambda functions for working with arrays. FILTER keeps only the elements that match a condition, while TRANSFORM applies an expression to each element and returns the results. Both functions use arrow syntax (element -> expression) and support field access on arrays of structs.

Budgeted merge deduplication fix

Fixed an issue where budgeted merges could produce inconsistent deduplication results. The internal sampling function has been replaced with a deterministic hash-based approach.

Workflow archive reliability fix

Fixed a race condition during workflow archiving that could leave behind orphaned schedules or running executions.

Jobs API returns 409 for completed jobs

The Jobs API now returns 409 Conflict instead of 400 Bad Request when attempting to run, fail, or complete an already-completed job.

Pinterest ad accounts display fix

Fixed an issue where Pinterest ad accounts were not loading in the connector configuration due to an incorrect API response path.

Taxonomy dialog layout fix

Fixed hidden controls and clipped overflowing content in taxonomy creation dialogs.

Week of March 16, 2026

API, Audience Studio, Breaking Change, Bug Fix, Composable Identity, Dashboard, Data Studio, Improvement, New Feature, Platform, Workflow DSL, Workflows

App Invites API

Applications can now create and manage invite links through the new App Invites API. Each invite generates a unique, shareable URL that can be sent to prospective users or partners, enabling a seamless onboarding experience directly from an app’s landing page.

Key capabilities include:

- Create invites with optional display names, invitee names, tags, and custom JSON data payloads

- Public code lookup — invitees can resolve an invite link without authentication, returning app and company details for a branded landing-page experience

- Lifecycle management — transition invites through pending, active, and archived states, with soft-delete semantics that preserve audit trails

- Scoped access — invite visibility is automatically scoped to the caller’s app or company context
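To make the lifecycle concrete, the in-memory model below archives invites by changing their state rather than deleting them, and scopes listings to the caller. It is a hypothetical sketch: the class names, the owner field, and the allowed transitions (pending to active or archived, active to archived) are assumptions, since this note names the states but not the transition rules.

```python
import secrets
from dataclasses import dataclass
from enum import Enum


class InviteState(Enum):
    PENDING = "pending"
    ACTIVE = "active"
    ARCHIVED = "archived"


# Assumed transition rules; the release note does not specify them.
_ALLOWED = {
    InviteState.PENDING: {InviteState.ACTIVE, InviteState.ARCHIVED},
    InviteState.ACTIVE: {InviteState.ARCHIVED},
    InviteState.ARCHIVED: set(),
}


@dataclass
class Invite:
    code: str
    owner: str  # app or company that created the invite
    state: InviteState = InviteState.PENDING


class InviteStore:
    """In-memory model of soft delete and scoping; not the App Invites API itself."""

    def __init__(self) -> None:
        self._invites = {}

    def create(self, owner: str) -> Invite:
        invite = Invite(code=secrets.token_urlsafe(9), owner=owner)
        self._invites[invite.code] = invite
        return invite

    def transition(self, code: str, new_state: InviteState) -> Invite:
        invite = self._invites[code]
        if new_state not in _ALLOWED[invite.state]:
            raise ValueError(f"cannot move from {invite.state.value} to {new_state.value}")
        invite.state = new_state  # archiving keeps the record (soft delete)
        return invite

    def list_for(self, caller: str) -> list:
        # Visibility is scoped to the caller's own app or company.
        return [i for i in self._invites.values() if i.owner == caller]
```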

Audience Studio Improvements

Several enhancements to the Audience Studio builder:

- Frequency filtering — Apply frequency constraints to audience filters (e.g., “users who performed an action at least N times”) for more precise audience segmentation

- Suggested filters — The builder now displays an audience overview summary bar with AI-suggested filter values based on dataset statistics, helping you discover impactful ways to segment your audience

- Auto-calculate toggle — Choose between automatic audience size estimation that recalculates on every filter change, or switch to manual calculation for more control during complex audience builds

- UI refinements — Clearer section labels (“Sources” and “Audience details”), compact action menus, and grouped connector eligibility with explanatory context
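Frequency filtering amounts to counting events per user and keeping users at or above the threshold. The sketch below shows that logic on an in-memory list of (user_id, action) pairs; it illustrates the semantics only, not how Audience Studio evaluates filters, and the function name is hypothetical.

```python
from collections import Counter
from typing import Iterable, Set, Tuple


def frequency_filter(events: Iterable[Tuple[str, str]], action: str, min_count: int) -> Set[str]:
    """Return users who performed `action` at least `min_count` times.

    `events` is an iterable of (user_id, action) pairs standing in for an
    event dataset.
    """
    counts = Counter(user for user, act in events if act == action)
    return {user for user, n in counts.items() if n >= min_count}


events = [
    ("u1", "purchase"), ("u1", "purchase"), ("u1", "view"),
    ("u2", "purchase"),
    ("u3", "purchase"), ("u3", "purchase"), ("u3", "purchase"),
]
print(sorted(frequency_filter(events, "purchase", 2)))  # ['u1', 'u3']
```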

Boolean attribute property toggles

Boolean schema properties such as Queryable, Required, and Sensitive now display as toggle switches in the dataset schema quickview panel. Previously these values were edited through a text-based inline flow; the new toggle switches make it faster and more intuitive to flip a property on or off without additional clicks.

Dataset Statistics Configuration API

A new API lets you configure automated statistics computation for your datasets. Define which statistics to calculate (value counts, distinct counts, histograms, mean, standard deviation, and more) at the dataset level, per field, or per Rosetta Stone attribute. Statistics can refresh on a cron schedule, on every data update, or on demand. The configuration supports recursive nesting for complex schemas, with an inheritance model that flows from global defaults through namespace scopes down to individual field overrides.

Enhancements and Bug Fixes

Opt-out Bloom Filter Fix: Resolved an issue where opt-out bloom filters could become saturated, improving the accuracy of compliance filtering for large datasets.

Dataset Rewrite Provenance Fix: Fixed a bug where dataset rewrites (compaction or schema migrations) would incorrectly drop the _nio_sources provenance column, permanently losing lineage metadata such as access rule IDs, pricing, and licensing terms for affected rows. Provenance data is now preserved through rewrites.

Yahoo DSP Connector Icon: Fixed an issue where the Yahoo DSP Connector icon was not rendering correctly due to a property naming mismatch.

Improved Scroll Behavior: Pages throughout the platform now use contained scrolling within each section and tab panel, rather than scrolling the entire page. This keeps navigation and headers visible while you scroll through long content in datasets, models, studios, and settings pages. The sidebar also scrolls independently from page content.

Cleaner Detail Page Layouts: Dataset and model detail pages no longer display redundant section headers within tab panels, reducing visual clutter and reclaiming vertical space.

Model Inference Billing

Model inference jobs are now tracked in the billing log. When a model inference job completes, a billable event is recorded automatically, giving you full visibility into inference usage and costs alongside your other platform charges.

NQL Toast Notifications

Validate and execute actions in Data Studio now display toast notifications, so you receive feedback regardless of your scroll position. This replaces the previous inline message bar, which could be missed when working in longer queries.

Redesigned help tabs across studios

The help tabs in Data Studio, LLM Studio, and Prompt Studio now use collapsible accordion sections, making it easier to find the guidance you need without scrolling through a single long document. Each section expands independently, with the overview open by default.

Data Studio’s help tab also includes expanded coverage of connectors, Rosetta AI, cost control, and materialized views, with syntax-highlighted code examples you can copy directly into your queries.

Workflow DSL: New tasks and improvements

Two new tasks are available in the Workflow DSL:

- CreateRosettaStoneMappingsIfNotExist — Create Rosetta Stone attribute mappings for a dataset directly from a workflow. Define one or more mappings that bind Rosetta Stone attributes to dataset columns via NQL expressions. Existing identical mappings are skipped automatically (idempotent), and partial failures can be allowed so one bad mapping doesn’t block the rest.

- RunModelInference — Run hosted LLM inference jobs from a workflow. Send messages to supported AI models (Claude, GPT, o4-mini) and receive structured JSON output conforming to a schema you define. Useful for classification, enrichment, and transformation steps in automated pipelines.
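The idempotent, partial-failure behavior described for CreateRosettaStoneMappingsIfNotExist can be modeled as follows. This is a hypothetical sketch: the Mapping type, the create callback, and the allow_partial_failure flag are illustrative names, not the task's actual parameters.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, Set


@dataclass(frozen=True)
class Mapping:
    attribute: str   # Rosetta Stone attribute name
    expression: str  # NQL expression over dataset columns


def create_mappings_if_not_exist(
    existing: Set[Mapping],
    requested: Iterable[Mapping],
    create: Callable[[Mapping], None],
    allow_partial_failure: bool = False,
) -> dict:
    """Create only mappings that do not already exist.

    Identical mappings are skipped, so re-running is idempotent. With
    allow_partial_failure=True, a failing mapping is recorded and the
    remaining mappings are still attempted; otherwise the error propagates.
    """
    result = {"created": [], "skipped": [], "failed": []}
    for mapping in requested:
        if mapping in existing:
            result["skipped"].append(mapping)
            continue
        try:
            create(mapping)
        except Exception as exc:
            if not allow_partial_failure:
                raise
            result["failed"].append((mapping, str(exc)))
            continue
        existing.add(mapping)
        result["created"].append(mapping)
    return result
```

Because successful creations are added to existing, a second run with the same input reports everything as skipped.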

Breaking change: camelCase output field names

All workflow task output field names now use camelCase instead of snake_case. If you have existing workflows that reference output fields in export.as expressions or ${…} interpolations, update them accordingly:

| Before | After |
|---|---|
| .dataset_id | .datasetId |
| .snapshot_id | .snapshotId |
| .recalculation_id | .recalculationId |
| .affected_rows | .affectedRows |
| .structured_output | .structuredOutput |
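If you have many workflows to update, a small script can rewrite the renamed references. The sketch below assumes output fields appear as .field_name paths inside export.as or ${…} expressions and only touches the five names in the table; the helper names are hypothetical, and you should review each rewritten workflow before saving it.

```python
import re

# The renames listed in the table; other task output fields follow the same
# snake_case -> camelCase rule, so extend this set if your workflows use them.
RENAMED_FIELDS = {
    "dataset_id", "snapshot_id", "recalculation_id", "affected_rows", "structured_output",
}


def snake_to_camel(name: str) -> str:
    head, *rest = name.split("_")
    return head + "".join(part.capitalize() for part in rest)


# Match ".field_name" only when the whole name is a known field (\b stops ".dataset_ids").
_FIELD_REF = re.compile(r"\.(" + "|".join(sorted(RENAMED_FIELDS)) + r")\b")


def migrate_expression(expr: str) -> str:
    """Rewrite known snake_case output-field references in an expression to camelCase."""
    return _FIELD_REF.sub(lambda m: "." + snake_to_camel(m.group(1)), expr)


print(migrate_expression("${ .dataset_id }"))  # ${ .datasetId }
```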

Week of March 9, 2026

Audience Studio, Bug Fix, Composable Identity, Improvement, Integrations, New Feature, Platform

Audience Studio Builder Enhancements

Audience Studio now includes a Start Over action that resets the builder state and cancels any in-flight forecast requests, making it easy to begin fresh without navigating away. The finalize view has been redesigned with an updated layout, and connector eligibility is now clearly indicated: ineligible connectors are disabled with explanatory context. Display name and description fields now support AI-assisted autofill, and audience unique names are now auto-generated from the display name.

Bug Fixes

Fixed an issue where opt-out bloom filters could become saturated on large datasets, potentially causing every record to be incorrectly marked as opted out. The bloom filter now sizes itself based on estimated row counts, improving accuracy of privacy opt-out enforcement.

Pinterest Audience Delivery

The Pinterest Connector now supports audience delivery, enabling you to push audience segments directly to Pinterest for ad targeting. Connect your Pinterest account, select your ad accounts, and deliver audiences built from hashed emails, phone numbers, or mobile advertising IDs.

Yahoo DSP Multi-Profile Support

The Yahoo DSP Connector now supports multiple profiles, allowing you to manage separate Yahoo DSP configurations within a single Narrative account. Switch between profiles when managing taxonomies, advertisers, opt-outs, and partner match settings.

Bug Fixes

Webhook delivery reliability: Resolved an issue where webhook deliveries could become permanently stuck in a “delivering” status when non-HTTP errors (such as network timeouts or DNS failures) occurred. Webhook events now properly retry on all error types and are marked as failed after exhausting retry attempts, preventing silent delivery stalls.

Budget calculation accuracy: Fixed a budget enforcement issue where the partition window function used a strict less-than comparison instead of less-than-or-equal, which could cause queries to slightly under-deliver relative to the specified budget.

UID2 resolution reliability: Added automatic retries with exponential backoff and timeouts to UID2 identity resolution requests, improving reliability when the UID2 service experiences transient errors.

Custom Attributes UI

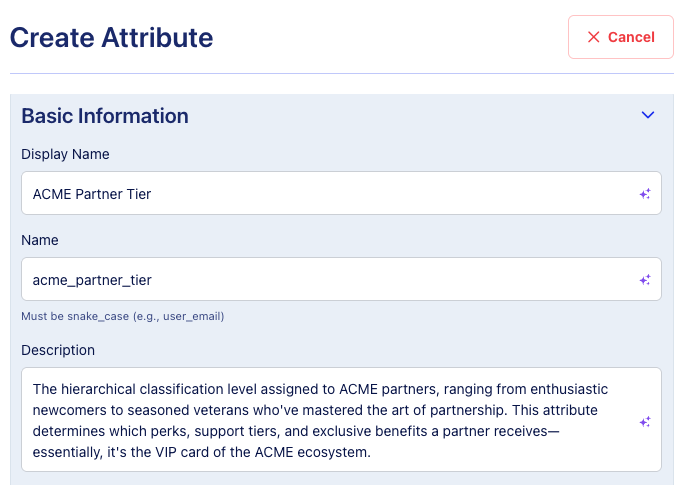

The Rosetta Stone Attributes page now includes a Create Attribute button, making it easy to define your own custom attributes directly from the UI. Custom attribute creation has been available via the API, and now you can do it without writing any code.

- Rosetta Stone can auto-generate the display name, name, and description for you using AI-assisted fields

- Custom attributes are private to your company by default, with the option to share more broadly

- View documentation →

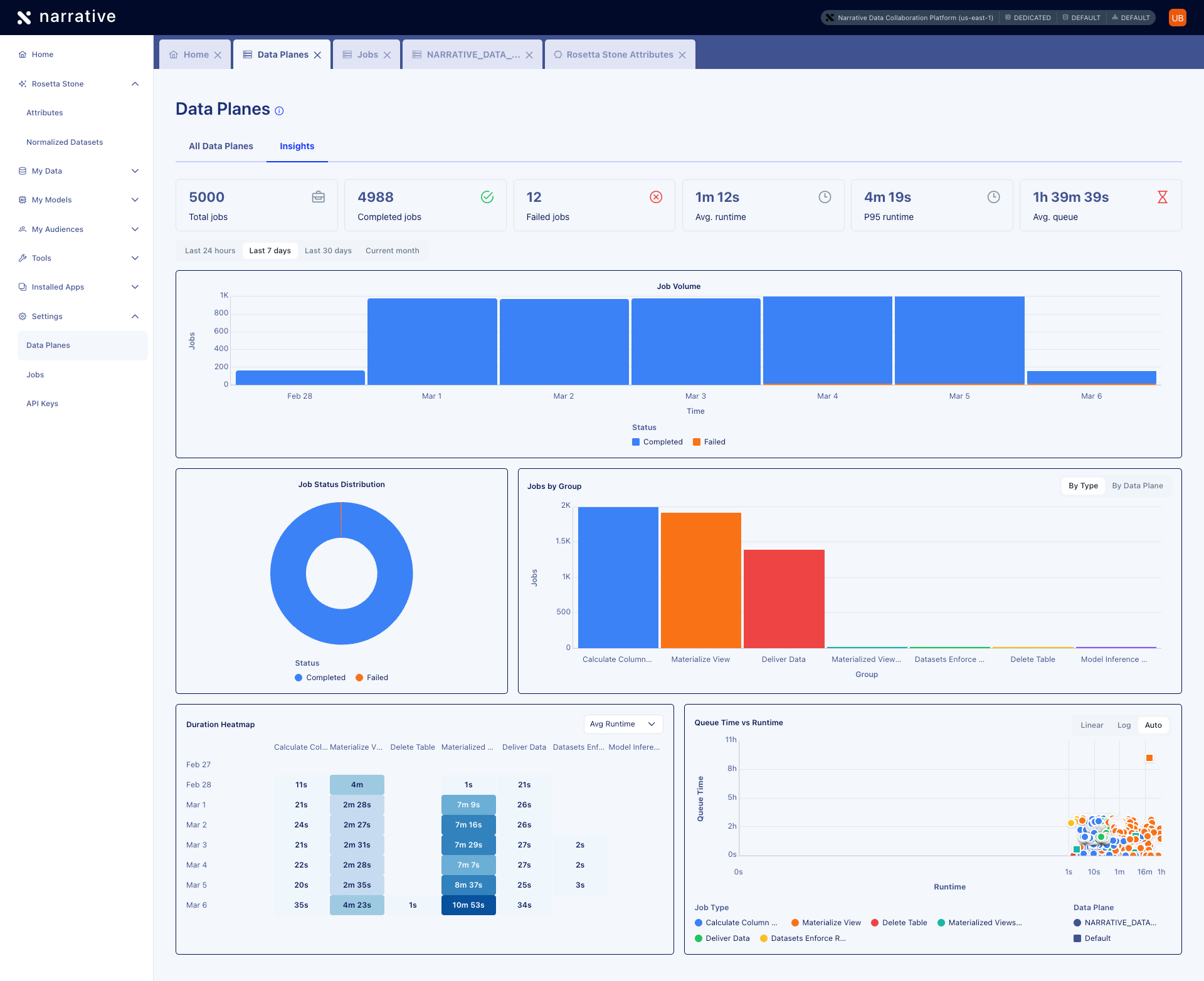

Data Planes Updates

The Settings → Data Planes page has been refreshed with an updated list view and a new Insights tab. The list view now includes sortable columns for ID, display name, unique name, and creation date. The Insights tab provides at-a-glance operational metrics — total jobs, completed and failed counts, average runtime, P95 runtime, and average queue time — along with visualizations for job volume, status distribution, jobs by group, a duration heatmap, and a queue time vs. runtime scatter plot.
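For readers who want to reproduce these numbers from their own job history, the sketch below derives the same headline metrics from a list of job records. The JobRecord fields, the choice to average runtime over all jobs regardless of status, and the nearest-rank P95 method are assumptions; the Insights tab may compute them differently.

```python
from dataclasses import dataclass
from statistics import mean
from typing import List


@dataclass
class JobRecord:
    status: str             # e.g. "completed" or "failed"
    queue_seconds: float    # time from enqueue to start
    runtime_seconds: float  # time from start to finish


def p95(values: List[float]) -> float:
    """95th percentile using the nearest-rank method (an assumed choice)."""
    ordered = sorted(values)
    rank = (95 * len(ordered) + 99) // 100  # integer ceil(0.95 * n)
    return ordered[rank - 1]


def insights(jobs: List[JobRecord]) -> dict:
    runtimes = [j.runtime_seconds for j in jobs]
    return {
        "total_jobs": len(jobs),
        "completed": sum(j.status == "completed" for j in jobs),
        "failed": sum(j.status == "failed" for j in jobs),
        "avg_runtime": mean(runtimes),
        "p95_runtime": p95(runtimes),
        "avg_queue_time": mean(j.queue_seconds for j in jobs),
    }
```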

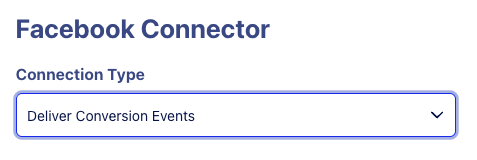

Meta Conversions API Connector

You can now send conversion events (purchases, leads, checkouts, and more) directly to Meta’s Conversions API for improved ad attribution and campaign optimization on Facebook and Instagram.

- Supports offline, online, and app conversion events

- Map your conversion data to the meta_conversion_event Rosetta Stone attribute for automatic transformation, hashing, and delivery

- PII fields are SHA-256 hashed before transmission; raw customer data is never sent to Meta

- View documentation →
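The connector handles hashing for you; the sketch below only illustrates what SHA-256 hashing of an email address looks like. The trim-and-lowercase normalization is assumed from Meta's published customer-information guidance, and the function name is hypothetical.

```python
import hashlib


def hash_email_for_meta(email: str) -> str:
    """SHA-256 hex digest of a normalized email address.

    Normalization (trim surrounding whitespace, lowercase) is an assumption
    based on Meta's public guidance; the connector applies its own rules.
    """
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()
```

Normalizing before hashing matters because SHA-256 is exact: "Jane.Doe@Example.com" and "jane.doe@example.com" would otherwise produce unrelated digests and fail to match.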

Workflow DSL Variables and Expressions

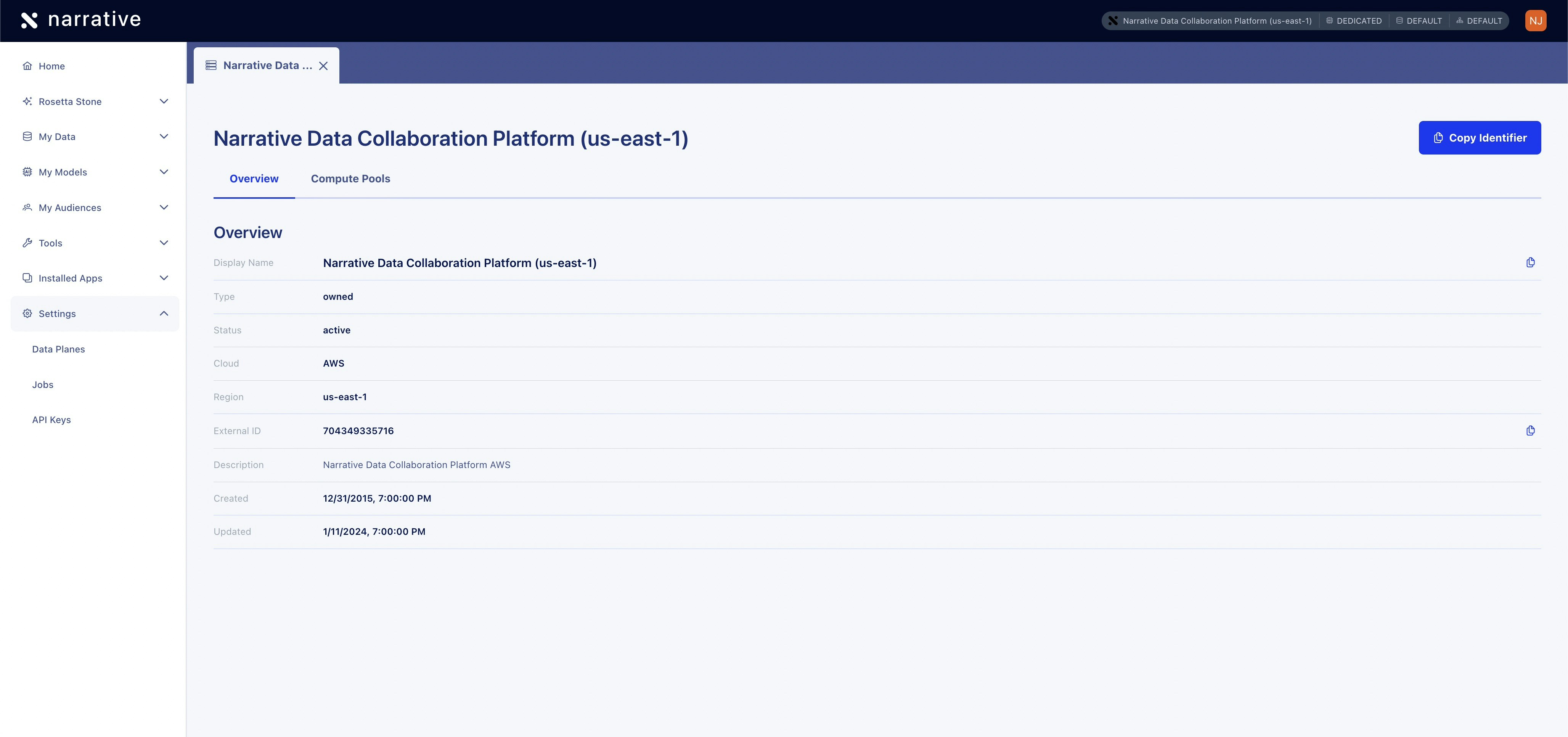

The serverless workflow DSL now supports variables and expressions for passing data between workflow steps. Use export.as to capture task outputs into a workflow context with jq expressions, reference previous results using ${...} variable syntax, and embed computed values into strings with jq interpolation. This enables dynamic, data-driven workflows where each step can build on the results of previous steps.

Data Plane Details Page

You can now click into any data plane from Settings > Data Planes to view its details. The details page includes an Overview tab showing configuration like display name, type, status, cloud provider, and region, along with a Compute Pools tab listing all compute pools assigned to that data plane.

Enhancements and Bug Fixes

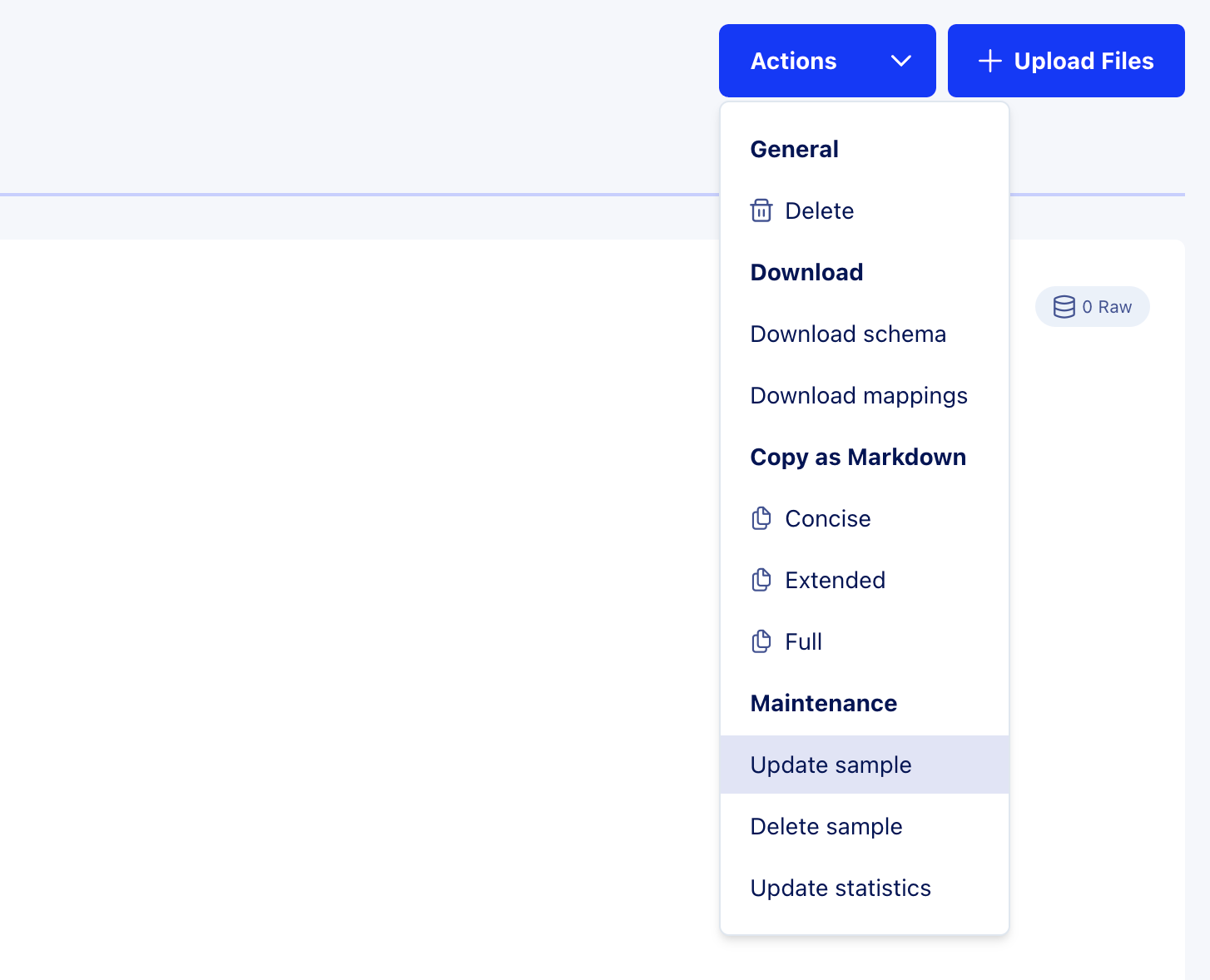

Secret Sharing UI: The Secret Sharing interface has been updated with a refreshed design that matches the look and feel of the rest of the platform. View documentation →

Update Sample Action

You can now request data samples directly from the UI on all supported data planes. Open a dataset, click the Actions dropdown, and select Update sample to trigger a sampling job.

- Dataset fields — Raw columns inherited from the dataset schema. These are always present, even when all values are empty.

- Rosetta Stone attributes — Normalized attribute properties promoted to top-level columns for convenience. For example, the longitude property of a location attribute (queried as _rosetta_stone.location.longitude in NQL) appears as a top-level longitude column in the sample viewer.

_) are also excluded from the sample view.

This release note focuses on the UI experience. For programmatic access, see the Sample Data API reference.

Workflow Data Passing

Workflows now support structured data passing between tasks. Instead of coordinating tasks purely by name-based dataset references, you can capture task output and inject it into downstream task parameters using a shared workflow context.

- Task output — Each task produces structured JSON (dataset IDs, snapshot IDs, row counts) accessible to subsequent tasks

- Export — Use export.as with jq expressions to merge task output into a workflow-wide $context that accumulates as tasks run

- Variable expressions — Use ${…} syntax in task parameters to inject values from the previous task’s output or $context
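A simplified Python model of the export-then-interpolate flow is shown below. It is not the DSL engine: real export.as and ${…} expressions are evaluated as jq, whereas this sketch resolves only dotted paths such as .datasetId (previous task output) and $context.sourceDatasetId (accumulated context). All function names are hypothetical.

```python
import re
from typing import Any, Dict

# Matches ${ path } and captures the path without surrounding whitespace.
_VAR = re.compile(r"\$\{\s*([^}]+?)\s*\}")


def resolve(path: str, output: Dict[str, Any], context: Dict[str, Any]) -> Any:
    """Resolve `.a.b` against the previous task's output, or `$context.a.b` against the context."""
    if path.startswith("$context"):
        value, rest = context, path[len("$context"):]
    else:
        value, rest = output, path
    for key in filter(None, rest.split(".")):
        value = value[key]
    return value


def export_as(context: Dict[str, Any], output: Dict[str, Any], fields: Dict[str, str]) -> Dict[str, Any]:
    """Return a new context with selected output fields merged in (stand-in for export.as)."""
    merged = dict(context)
    for name, path in fields.items():
        merged[name] = resolve(path, output, context)
    return merged


def interpolate(template: str, output: Dict[str, Any], context: Dict[str, Any]) -> str:
    """Replace each ${ path } in a task parameter with its resolved value."""
    return _VAR.sub(lambda m: str(resolve(m.group(1), output, context)), template)


if __name__ == "__main__":
    create_output = {"datasetId": 42, "snapshotId": 7}
    context = export_as({}, create_output, {"sourceDatasetId": ".datasetId"})
    print(interpolate("Refreshing dataset ${ $context.sourceDatasetId }", {}, context))
    # Refreshing dataset 42
```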

Sample Data

Dataset sampling now includes Rosetta Stone normalized attributes alongside your raw data columns, giving you full visibility into how your data transforms through Rosetta Stone mappings directly in the sample viewer.

- Hover over any dataset field with computed statistics to see detailed information including value distributions and null rates

- View Rosetta Stone attribute values alongside your source columns in dataset samples

- Dataset sampling on the Narrative AWS US-East-1 Shared data plane now runs as a background job, matching the Snowflake data plane experience—samples can be requested and gathered for any dataset

- View documentation →

A dedicated action button to trigger sampling on AWS data planes from the UI is coming soon. Samples can currently be requested via the API and SDK.

Context Selector

Set your execution context across four dimensions with the new context selector in the top navigation. This segmented pill button replaces the previous “Data Plane” dropdown and gives you control over where your queries execute and what data is visible.

- Switch between data planes to change which objects are visible throughout the platform

- Choose compute pools to control whether queries use dedicated, shared, or default resources

- Set database and schema scope for your current session

- Context is saved per-browser and persists across sessions

- View documentation →

Enhancements and Bug Fixes

OBJECT_REMOVE_NULLS Cross-Platform Support: The OBJECT_REMOVE_NULLS function is now accepted on AWS hosted data planes as a no-op, so NQL queries that use it on Snowflake don’t need to be rewritten for AWS.

Enhancements and Bug Fixes

File Uploads with Special Characters: You can now upload files with special characters in their names (such as square brackets, spaces, and other non-alphanumeric characters) during dataset creation. Previously, these file names would cause the upload to fail, requiring you to rename files before uploading.

FORMAT_TIMESTAMP Function

Format timestamps into custom string representations with the new FORMAT_TIMESTAMP NQL function. Convert timestamp columns to date-only strings, time-only strings, or ISO 8601 formats with or without timezone information.

- Supports standard date/time format patterns (YYYY, MM, DD, HH24, MI, SS, TZH, TZM)

- Works across both Snowflake and AWS hosted data planes

- View documentation →
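The sketch below shows how the listed pattern tokens map onto a timestamp's fields, which can help when checking expected output. It is a Python illustration, not NQL: the TZH/TZM rendering (a signed hour such as +02 and a two-digit minute) follows Snowflake-style conventions and is an assumption, naive timestamps are treated as UTC, and quoted literals inside patterns are not supported.

```python
import re
from datetime import datetime, timedelta, timezone


def format_timestamp(ts: datetime, pattern: str) -> str:
    """Render `ts` using YYYY, MM, DD, HH24, MI, SS, TZH and TZM tokens."""
    offset = ts.utcoffset() or timedelta(0)  # naive timestamps treated as UTC
    total_minutes = int(offset.total_seconds() // 60)
    sign = "+" if total_minutes >= 0 else "-"
    tz_hours, tz_minutes = divmod(abs(total_minutes), 60)
    tokens = {
        "YYYY": f"{ts.year:04d}",
        "HH24": f"{ts.hour:02d}",
        "TZH": f"{sign}{tz_hours:02d}",
        "TZM": f"{tz_minutes:02d}",
        "MM": f"{ts.month:02d}",
        "DD": f"{ts.day:02d}",
        "MI": f"{ts.minute:02d}",
        "SS": f"{ts.second:02d}",
    }
    # Longer tokens are tried first, a common safeguard against prefix clashes.
    token_re = re.compile("|".join(sorted(tokens, key=len, reverse=True)))
    return token_re.sub(lambda m: tokens[m.group(0)], pattern)


if __name__ == "__main__":
    ts = datetime(2026, 3, 9, 14, 5, 7, tzinfo=timezone(timedelta(hours=2)))
    print(format_timestamp(ts, "YYYY-MM-DD"))  # 2026-03-09
```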

Workflow Orchestration

Automate multi-step data pipelines that execute in a defined sequence based on task dependencies. Define your workflow once using the Serverless Workflow DSL (YAML format) and let the system handle orchestration, with no manual triggering required.

- Create materialized views, refresh them, and run DML operations in automated sequences

- Schedule workflows with cron expressions for recurring execution

- Sequential task execution with fail-fast error handling

- Three built-in tasks: CreateMaterializedViewIfNotExists, RefreshMaterializedView, ExecuteDml

- View documentation →

Workflows can be created and edited via the API. You can view, trigger, and archive workflows from the Workflows UI under Settings > Workflows.
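Sequential, dependency-ordered execution with fail-fast handling can be sketched with Python's standard graphlib module. This is a local model of the behavior described above, not the platform's orchestrator; the run_workflow name, the skipped status, and the task callables are hypothetical.

```python
from graphlib import TopologicalSorter
from typing import Callable, Dict, Set


def run_workflow(
    tasks: Dict[str, Callable[[], object]],
    depends_on: Dict[str, Set[str]],
) -> Dict[str, str]:
    """Run tasks one at a time in dependency order and stop at the first failure."""
    graph = {name: depends_on.get(name, set()) for name in tasks}
    statuses: Dict[str, str] = {}
    for name in TopologicalSorter(graph).static_order():
        try:
            tasks[name]()
        except Exception:
            statuses[name] = "failed"
            break  # fail fast: no later task starts
        statuses[name] = "completed"
    for name in tasks:
        statuses.setdefault(name, "skipped")
    return statuses


if __name__ == "__main__":
    def refresh() -> None:
        raise RuntimeError("refresh failed")

    print(run_workflow(
        {
            "CreateMaterializedViewIfNotExists": lambda: None,
            "RefreshMaterializedView": refresh,
            "ExecuteDml": lambda: None,
        },
        {
            "RefreshMaterializedView": {"CreateMaterializedViewIfNotExists"},
            "ExecuteDml": {"RefreshMaterializedView"},
        },
    ))
```

A cycle in depends_on makes static_order raise graphlib.CycleError before any task runs, which is a reasonable place to reject an invalid definition.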

Enhancements and Bug Fixes

AI-Generated Dataset Descriptions: Click the Rosetta icon next to any dataset description to automatically generate a rich, contextual description. Rosetta AI analyzes your dataset’s schema and sample data to create descriptions that explain what the data is and where it came from, saving you from writing documentation from scratch.

Simplified Refresh Schedule Editor: We removed the confusing “Advanced” mode from the refresh schedule editor based on user feedback. Need a custom cron schedule? Just describe it in plain language and Rosetta AI generates the correct cron expression for you.

Rosetta Stone Normalized Datasets Page

Evaluate and improve your data normalization with full visibility into Rosetta Stone’s AI-powered mapping confidence scores. The new Normalized Datasets view (Rosetta Stone → Normalized Datasets) surfaces how well your datasets map to Rosetta Stone attributes, helping you quickly identify which mappings need attention.

- View all normalized datasets with color-coded confidence scores (high/medium/low)

- See mapping type indicators showing AI-generated vs. manual mappings

- Sort by columns, rows, confidence, or creation date

- Filter by data plane to focus on specific environments

- Search datasets by name

TikTok Audience Connector

Deliver first-party audiences directly to TikTok Ad Accounts within your Business Center through Narrative’s new TikTok Connector.

- Secure OAuth 2.0 authentication with TikTok Business Center

- Support for SHA256 hashed emails, phone numbers, and mobile advertising IDs (IDFA/GAID)

- Create new audiences or update existing TikTok Custom Audiences

- Distribute audiences across multiple Ad Accounts

- Available in Audience Studio and My Data → Datasets → Connections tab

Previous Release Notes

Q4 2025

October – December 2025